Do these techniques revolve around keyword research, meta descriptions, and backlinks?

If so, you’re not alone. When it comes to SEO, these techniques are usually the first ones marketers add to their arsenal.

While these strategies do improve your site’s visibility in organic search, they’re not the only ones you should be using. There’s another set of tactics that falls under the SEO umbrella.

Technical SEO refers to the behind-the-scenes elements that power your organic growth engine, such as site architecture, mobile optimization, and page speed. These aspects of SEO might not be the sexiest, but they’re incredibly important.

The first step in improving your technical SEO is knowing where you stand by performing a site audit. The second step is to create a plan to address the areas where you fall short. We’ll cover these steps in depth below.

Pro tip: Create a website designed to convert using HubSpot’s free CMS tools.

What is technical SEO?

Technical SEO refers to anything you do that makes your site easier for search engines to crawl and index. Technical SEO, content strategy, and link-building strategies all work in tandem to help your pages rank highly in search.

Technical SEO vs. On-Page SEO vs. Off-Page SEO

Many people break down search engine optimization (SEO) into three different buckets: on-page SEO, off-page SEO, and technical SEO. Let’s quickly cover what each means.

On-Page SEO

On-page SEO refers to the content that tells search engines (and readers!) what your page is about, including image alt text, keyword usage, meta descriptions, H1 tags, URL naming, and internal linking. You have the most control over on-page SEO because, well, everything is on your site.

Off-Page SEO

Off-page SEO tells search engines how popular and useful your page is through votes of confidence, most notably backlinks, or links from other sites to your own. Backlink quantity and quality boost a page’s PageRank. All things being equal, a page with 100 relevant links from credible sites will outrank a page with 50 relevant links from credible sites (or 100 irrelevant links from credible sites).

Technical SEO

Technical SEO is within your control as well, but it’s a bit trickier to master since it’s less intuitive.

Why is technical SEO important?

You may be tempted to ignore this component of SEO completely; however, it plays an important role in your organic traffic. Your content might be the most thorough, useful, and well-written, but unless a search engine can crawl it, very few people will ever see it.

It’s like a tree that falls in the forest when no one is around to hear it … does it make a sound? Without a strong technical SEO foundation, your content will make no sound to search engines.

Let’s discuss how you can make your content resound through the internet.

Understanding Technical SEO

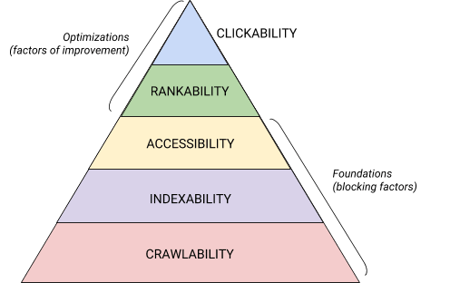

Technical SEO is a beast that is best broken down into digestible pieces. If you’re like me, you like to tackle big things in chunks and with checklists. Believe it or not, everything we’ve covered so far can be placed into one of five categories, each of which deserves its own list of actionable items.

These five categories and their place in the technical SEO hierarchy are best illustrated by this beautiful graphic that is reminiscent of Maslow’s Hierarchy of Needs but remixed for search engine optimization. (Note that we will use the commonly used term “Rendering” in place of Accessibility.)

Technical SEO Audit Fundamentals

Before you begin your technical SEO audit, there are a few fundamentals that you need to put in place.

Let’s cover these technical SEO fundamentals before we move on to the rest of your website audit.

Audit Your Preferred Domain

Your domain is the URL that people type to arrive at your site, like hubspot.com. Your website domain impacts whether people can find you through search and provides a consistent way to identify your site.

When you select a preferred domain, you’re telling search engines whether you prefer the www or non-www version of your site to be displayed in the search results. For example, you might select www.yourwebsite.com over yourwebsite.com. This tells search engines to prioritize the www version of your site and redirects all users to that URL. Otherwise, search engines will treat these two versions as separate sites, resulting in dispersed SEO value.

Previously, Google asked you to identify the version of your URL that you prefer. Now, Google will identify and select a version to show searchers for you. However, if you prefer to set the preferred version of your domain yourself, you can do so through canonical tags (which we’ll cover shortly). Either way, once you set your preferred domain, make sure that all variants (www, non-www, http, and index.html) permanently redirect to that version.
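To make the "all variants redirect to one version" idea concrete, here is a minimal Python sketch of the mapping a redirect rule would implement. The host names and the choice of the https www variant as the preferred one are assumptions for illustration; your server or CMS would perform the actual 301 redirect.

```python
from urllib.parse import urlsplit, urlunsplit

# Assumptions for illustration: placeholder domain, https + www preferred.
BARE_HOST = "yourwebsite.com"
PREFERRED_HOST = "www." + BARE_HOST
PREFERRED_SCHEME = "https"


def preferred_url(url: str) -> str:
    """Map a www/non-www, http/https, or index.html variant to one URL."""
    parts = urlsplit(url)
    if parts.netloc.lower() not in (BARE_HOST, PREFERRED_HOST):
        return url  # not one of our own variants, leave it untouched
    path = parts.path or "/"
    if path.endswith("/index.html"):
        path = path[: -len("index.html")]  # keep the trailing slash
    return urlunsplit((PREFERRED_SCHEME, PREFERRED_HOST, path, parts.query, ""))


for variant in ("http://yourwebsite.com/index.html", "http://www.yourwebsite.com/blog"):
    print(variant, "->", preferred_url(variant))
```

Any URL whose preferred form differs from the URL that was requested is a candidate for a permanent redirect.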

Implement SSL

You may have heard this term before, and that’s because it’s pretty important. SSL, or Secure Sockets Layer (since succeeded by TLS, although the name SSL has stuck), creates a layer of protection between the web server (the software responsible for fulfilling an online request) and a browser, thereby making your site secure. When a user sends information to your website, like payment or contact info, that information is less likely to be intercepted because you have SSL to protect it.

An SSL certificate is denoted by a domain that begins with “https://” as opposed to “http://” and a lock symbol in the URL bar.

Search engines prioritize secure sites; in fact, Google announced as early as 2014 that SSL would be considered a ranking factor. Because of this, be sure to set the SSL variant of your homepage as your preferred domain.

After you set up SSL, you’ll need to migrate any non-SSL pages from http to https. It’s a tall order, but worth the effort in the name of improved ranking. Here are the steps you need to take:

- Redirect all http://yourwebsite.com pages to https://yourwebsite.com.

- Update all canonical and hreflang tags accordingly.

- Update the URLs in your sitemap (located at yourwebsite.com/sitemap.xml) and your robots.txt (located at yourwebsite.com/robots.txt).

- Set up a new instance of Google Search Console and Bing Webmaster Tools for your https website and track it to make sure 100% of the traffic migrates over.

Optimize Page Speed

Do you know how long a website visitor will wait for your website to load? Six seconds … and that’s being generous. Some data shows that the bounce rate increases by 90% with an increase in page load time from one to five seconds. You don’t have one second to waste, so improving your site load time should be a priority.

Site speed isn’t just important for user experience and conversion; it’s also a ranking factor.

Use these tips to improve your average page load time:

- Compress all of your files. Compression reduces the size of your images, as well as CSS, HTML, and JavaScript files, so they take up less space and load faster.

- Audit redirects regularly. Every 301 redirect adds processing time before the page can load. Multiply that over several pages or layers of redirects, and you’ll seriously impact your site speed.

- Trim down your code. Messy code can negatively impact your site speed. Messy code means code that’s lazy. It’s like writing: maybe in the first draft, you make your point in six sentences. In the second draft, you make it in three. The more efficient the code is, the more quickly the page will load (in general). Once you clean things up, you’ll minify and compress your code.

- Consider a content distribution network (CDN). CDNs are distributed web servers that store copies of your website in various geographical locations and deliver your site based on the searcher’s location. Since the information between servers has a shorter distance to travel, your site loads faster for the requesting party.

- Try not to go plugin happy. Outdated plugins often have security vulnerabilities that make your website susceptible to malicious hackers who can harm your website’s rankings. Make sure you’re always using the latest versions of plugins and keep your use to the most essential ones. In the same vein, consider using custom-made themes, as pre-made website themes often come with a lot of unnecessary code.

- Take advantage of cache plugins. Cache plugins store a static version of your site to send to returning users, thereby decreasing the time to load the site during repeat visits.

- Use asynchronous (async) loading. Scripts are instructions that browsers need to read before they can process the HTML, or body, of your webpage, i.e. the things visitors want to see on your site. Typically, scripts are placed in the <head> of a website (think: your Google Tag Manager script), where they are prioritized over the content on the rest of the page. Using async code means the browser can process the HTML and the script simultaneously, thereby decreasing the delay and improving page load time.

Here’s how an async script looks: <script async src="script.js"></script>
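To get a feel for what the compression tip buys you, here is a small Python sketch that gzips a made-up, repetitive chunk of HTML and compares sizes. The sample markup is an assumption for illustration; real pages compress less dramatically, and in practice your web server or CDN applies the compression for you.

```python
import gzip

# Hypothetical sample: repetitive markup, similar to a long product list.
SAMPLE_HTML = ('<li class="product">Example item</li>\n' * 500).encode("utf-8")


def compression_ratio(data: bytes) -> float:
    """Return compressed size divided by original size (lower is better)."""
    return len(gzip.compress(data)) / len(data)


print(f"original size: {len(SAMPLE_HTML)} bytes")
print(f"gzip size:     {len(gzip.compress(SAMPLE_HTML))} bytes")
print(f"ratio:         {compression_ratio(SAMPLE_HTML):.3f}")
```

Text-based assets such as HTML, CSS, and JavaScript tend to benefit the most, because they contain a lot of repeated patterns.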

If you want to see where your website falls short in the speed department, you can use this resource from Google.

Once you have your technical SEO fundamentals in place, you’re ready to move on to the next stage: crawlability.

Crawlability Checklist

Crawlability is the foundation of your technical SEO strategy. Search bots will crawl your pages to gather information about your site.

If these bots are somehow blocked from crawling, they can’t index or rank your pages. The first step to implementing technical SEO is to ensure that all of your important pages are accessible and easy to navigate.

Below we’ll cover some items to add to your checklist as well as some website elements to audit to ensure that your pages are prime for crawling.

- Create an XML sitemap.

- Maximize your crawl budget.

- Optimize your site architecture.

- Set a URL structure.

- Utilize robots.txt.

- Add breadcrumb menus.

- Use pagination.

- Check your SEO log files.

1. Create an XML sitemap.

Remember that site structure we went over? That belongs in something called an XML sitemap, which helps search bots understand and crawl your web pages. You can think of it as a map for your website. You’ll submit your sitemap to Google Search Console and Bing Webmaster Tools once it’s complete. Remember to keep your sitemap up to date as you add and remove web pages.
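If your CMS doesn’t generate a sitemap for you, the file itself is simple. Below is a minimal Python sketch that builds one with the standard library, following the sitemaps.org 0.9 format. The page URLs and dates are placeholders, and `build_sitemap` is a hypothetical helper name, not part of any SEO tool.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder pages; in practice this list would come from your CMS.
PAGES = [
    ("https://www.yourwebsite.com/", "2024-01-15"),
    ("https://www.yourwebsite.com/blog", "2024-01-10"),
]
print(build_sitemap(PAGES))
```

Regenerating the file whenever pages are added or removed is what keeps it up to date.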

2. Maximize your crawl budget.

Your crawl budget refers to the pages and resources on your site that search bots will crawl.

Because crawl budget isn’t infinite, make sure you’re prioritizing your most important pages for crawling.

Here are a few tips to ensure you’re maximizing your crawl budget:

- Remove or canonicalize duplicate pages.

- Fix or redirect any broken links.

- Make sure your CSS and JavaScript files are crawlable.

- Check your crawl stats regularly and watch for sudden dips or increases.

- Make sure any bot or page you’ve disallowed from crawling is meant to be blocked.

- Keep your sitemap updated and submit it to the appropriate webmaster tools.

- Prune your site of unnecessary or outdated content.

- Watch out for dynamically generated URLs, which can make the number of pages on your site skyrocket.

3. Optimize your site architecture.

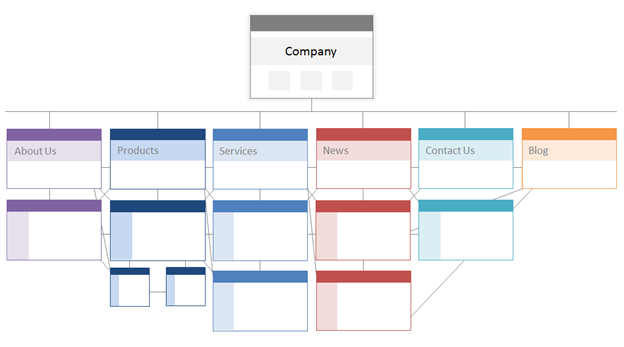

Your website has multiple pages. Those pages need to be organized in a way that allows search engines to easily find and crawl them. That’s where your site structure, often referred to as your website’s information architecture, comes in.

In the same way that a building is based on architectural design, your site architecture is how you organize the pages on your site.

Related pages are grouped together; for example, your blog homepage links to individual blog posts, which each link to their respective author pages. This structure helps search bots understand the relationship between your pages.

Your site architecture should also shape, and be shaped by, the importance of individual pages. The closer Page A is to your homepage, the more pages link to Page A, and the more link equity those pages have, the more importance search engines will give to Page A.

For example, a link from your homepage to Page A demonstrates more significance than a link from a blog post. The more links to Page A, the more “significant” that page becomes to search engines.

Conceptually, a site architecture could look something like this, where the About, Product, News, etc. pages are positioned at the top of the hierarchy of page importance.

Make sure the most important pages to your business are at the top of the hierarchy with the greatest number of (relevant!) internal links.

4. Set a URL structure.

URL structure refers to how you structure your URLs, which could be determined by your site architecture. I’ll explain the connection in a moment. First, let’s clarify that URLs can have subdomains, like blog.hubspot.com, and/or subdirectories (also called subfolders), like hubspot.com/blog, that indicate where the URL leads.

For example, a blog post titled Groom Your Dog would fall under a blog subdomain or subdirectory. The URL might be https://ift.tt/tf6SveD, whereas a product page on that same site would be https://ift.tt/3hcUfDt.

Whether you use subdomains or subdirectories, or “products” versus “store” in your URL, is entirely up to you. The beauty of creating your own website is that you can create the rules. What’s important is that those rules follow a unified structure, meaning that you shouldn’t switch between blog.yourwebsite.com and yourwebsite.com/blogs on different pages. Create a roadmap, apply it to your URL naming structure, and stick to it.

Here are a few more tips on how to write your URLs:

- Use lowercase characters.

- Use dashes to separate words.

- Make them short and descriptive.

- Avoid using unnecessary characters or words (including prepositions).

- Include your target keywords.
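The URL tips above are easy to automate. Here is a minimal Python sketch of a slug generator that lowercases the title, separates words with dashes, and drops a short list of filler words. The `STOP_WORDS` set is an assumption for illustration; adjust it to your own naming rules.

```python
import re

# Assumed list of filler words to drop; tune this for your own site.
STOP_WORDS = {"a", "an", "the", "to", "of", "in", "on", "for", "with", "at", "by"}


def slugify(title: str) -> str:
    """Turn a page title into a short, lowercase, dash-separated slug."""
    words = re.findall(r"[a-z0-9]+", title.lower())
    kept = [w for w in words if w not in STOP_WORDS] or words
    return "-".join(kept)


print(slugify("Groom Your Dog"))
print(slugify("The Guide to Technical SEO"))
```

Whatever rules you pick, applying them through one function keeps every new URL consistent with the structure you committed to.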

Once you have your URL structure buttoned up, you’ll submit a list of URLs of your important pages to search engines in the form of an XML sitemap. Doing so gives search bots additional context about your site so they don’t have to figure it out as they crawl.

5. Utilize robots.txt.

When a web robot crawls your site, it will first check the /robots.txt file, otherwise known as the Robots Exclusion Protocol. This protocol can allow or disallow specific web robots to crawl your site, including specific sections or even pages of your site. If you’d like to prevent bots from indexing your site, you’ll use a noindex robots meta tag. Let’s discuss both of these scenarios.

You may want to block certain bots from crawling your site altogether. Unfortunately, there are some bots out there with malicious intent: bots that will scrape your content or spam your community forums. If you notice this bad behavior, you’ll use your robots.txt to tell them to stay out of your website. In this scenario, you can think of robots.txt as your force field against bad bots on the internet. Keep in mind that robots.txt is advisory: well-behaved crawlers honor it, but a truly malicious bot can ignore it.

Regarding indexing, search bots crawl your site to gather clues and find keywords so they can match your web pages with relevant search queries. But, as we discussed earlier, you have a crawl budget that you don’t want to spend on unnecessary data. So, you may want to exclude pages that don’t help search bots understand what your website is about, for example, a Thank You page from an offer or a login page.

No matter what, your robots.txt file will be unique depending on what you’d like to accomplish.
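Before you publish a robots.txt file, it helps to check that it blocks what you intend and nothing more. Here is a minimal sketch using Python’s standard `urllib.robotparser` module. The rules, the bot name “BadBot”, and the paths are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: block one misbehaving bot entirely, and keep
# all other bots away from low-value pages.
ROBOTS_TXT = """\
User-agent: BadBot
Disallow: /

User-agent: *
Disallow: /login
Disallow: /thank-you
"""


def can_crawl(user_agent: str, url: str) -> bool:
    """Check whether the rules above allow user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, url)


for agent, path in [("BadBot", "/blog"), ("Googlebot", "/blog"), ("Googlebot", "/login")]:
    url = "https://www.yourwebsite.com" + path
    print(agent, path, can_crawl(agent, url))
```

Running your most important URLs through a check like this is a cheap way to catch a rule that accidentally blocks a page you want crawled.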

6. Add breadcrumb menus.

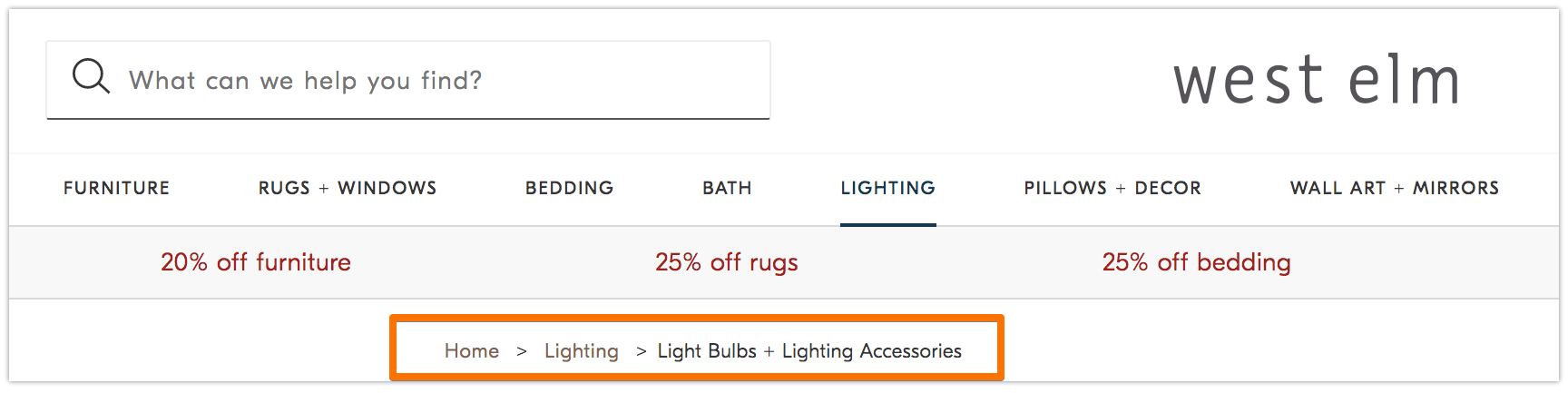

Remember the old fable Hansel and Gretel, where two children dropped breadcrumbs on the ground to find their way back home? Well, they were on to something.

Breadcrumbs are exactly what they sound like: a trail that guides users back to the start of their journey on your website. It’s a menu of pages that tells users how their current page relates to the rest of the site.

And they aren’t just for website visitors; search bots use them, too.

Breadcrumbs should be two things: 1) visible to users so they can easily navigate your web pages without using the Back button, and 2) marked up with structured data to give accurate context to the search bots that are crawling your site.

Not sure how to add structured data to your breadcrumbs? Use this guide for BreadcrumbList.
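As a rough illustration of what that structured data looks like, here is a Python sketch that builds schema.org BreadcrumbList markup as JSON-LD. The trail of page names and URLs is a placeholder, and `breadcrumb_jsonld` is a hypothetical helper; the resulting JSON would sit inside a `<script type="application/ld+json">` tag on the page.

```python
import json


def breadcrumb_jsonld(trail):
    """Build BreadcrumbList JSON-LD from ordered (name, url) pairs."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "BreadcrumbList",
            "itemListElement": [
                {"@type": "ListItem", "position": i, "name": name, "item": url}
                for i, (name, url) in enumerate(trail, start=1)
            ],
        },
        indent=2,
    )


# Placeholder trail: Home > Blog > current post.
TRAIL = [
    ("Home", "https://www.yourwebsite.com/"),
    ("Blog", "https://www.yourwebsite.com/blog"),
    ("Groom Your Dog", "https://www.yourwebsite.com/blog/groom-your-dog"),
]
print(breadcrumb_jsonld(TRAIL))
```

The positions mirror the order of the visible breadcrumb menu, so the markup and what users see stay in sync.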

7. Use pagination.

Remember when teachers would require you to number the pages on your research paper? That’s called pagination. In the world of technical SEO, pagination has a slightly different role, but you can still think of it as a form of organization.

Pagination uses code to tell search engines when pages with distinct URLs are related to each other. For instance, you may have a content series that you break up into chapters or multiple webpages. If you want to make it easy for search bots to discover and crawl these pages, then you’ll use pagination.

The way it works is pretty simple. You’ll go to the <head> of page one of the series and use rel="next" to tell the search bot which page to crawl second. Then, on page two, you’ll use rel="prev" to indicate the prior page and rel="next" to indicate the following page, and so on.

It looks like this…

On page one:

<link rel="next" href="https://www.website.com/page-two" />

On page two:

<link rel="prev" href="https://www.website.com/page-one" />

<link rel="next" href="https://www.website.com/page-three" />

Note that pagination is useful for crawl discovery, but is no longer supported by Google to batch index pages as it once was.
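If your series has more than a handful of pages, writing these tags by hand gets error-prone. Here is a minimal Python sketch that produces the prev/next tags for any page in a series. The URLs are placeholders, and `pagination_links` is a hypothetical helper name.

```python
from html import escape


def pagination_links(page_urls, index):
    """Return the <link> tags for the page at position index (0-based)."""
    tags = []
    if index > 0:
        prev_url = escape(page_urls[index - 1], quote=True)
        tags.append(f'<link rel="prev" href="{prev_url}" />')
    if index < len(page_urls) - 1:
        next_url = escape(page_urls[index + 1], quote=True)
        tags.append(f'<link rel="next" href="{next_url}" />')
    return tags


SERIES = [
    "https://www.website.com/page-one",
    "https://www.website.com/page-two",
    "https://www.website.com/page-three",
]
for tag in pagination_links(SERIES, 1):
    print(tag)
```

The first page only gets a next tag and the last page only gets a prev tag, which matches the pattern shown above.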

8. Check your SEO log files.

You can think of log files like a journal entry. Web servers (the journaler) record and store log data about every action they take on your site in log files (the journal). The data recorded includes the time and date of the request, the content requested, and the requesting IP address. You can also identify the user agent, which is a uniquely identifiable piece of software (like a search bot, for example) that fulfills the request for a user.

But what does this have to do with SEO?

Well, search bots leave a trail in the form of log files when they crawl your site. You can determine if, when, and what was crawled by checking the log files and filtering by the user agent and search engine.

This information is useful to you because you can determine how your crawl budget is spent and which barriers to indexing or access a bot is experiencing. To access your log files, you can either ask a developer or use a log file analyzer, like Screaming Frog.
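If you’d like to peek at the raw logs yourself, here is a rough Python sketch that filters log lines by user agent and counts which paths a search bot requested. It assumes your server writes the common “combined” log format, and the sample lines are invented. Note that a user agent string can be spoofed, so treat this as a first pass rather than proof of who crawled what.

```python
import re
from collections import Counter

# Assumes the "combined" access log format used by many web servers.
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def crawled_paths(log_lines, bot_token="Googlebot"):
    """Count (path, status) pairs requested by agents containing bot_token."""
    hits = Counter()
    for line in log_lines:
        match = LOG_PATTERN.match(line)
        if match and bot_token in match.group("agent"):
            hits[(match.group("path"), match.group("status"))] += 1
    return hits


# Invented sample lines for illustration.
SAMPLE_LOG = [
    '66.249.66.1 - - [10/Oct/2023:13:55:36 +0000] "GET /blog/ HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Oct/2023:13:55:40 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Oct/2023:13:56:02 +0000] "GET /blog/ HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]
for (path, status), count in crawled_paths(SAMPLE_LOG).items():
    print(path, status, count)
```

A bot repeatedly hitting 404s or redirects is a sign that crawl budget is being spent on pages that no longer matter.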

Simply because a search bot can crawl your website doesn’t essentially imply that it may index your entire pages. Let’s check out the following layer of your technical Search engine optimization audit — indexability.

Indexability Guidelines

As search bots crawl your web site, they start indexing pages primarily based on their matter and relevance to that matter. As soon as listed, your web page is eligible to rank on the SERPs. Listed below are just a few elements that may assist your pages get listed.

- Unblock search bots from accessing pages.

- Remove duplicate content.

- Audit your redirects.

- Check the mobile-responsiveness of your site.

- Fix HTTP errors.

1. Unblock search bots from accessing pages.

You’ll likely take care of this step when addressing crawlability, but it’s worth mentioning here. You want to ensure that bots are sent to your preferred pages and that they can access them freely. You have a few tools at your disposal to do this. Google’s robots.txt tester will give you a list of pages that are disallowed, and you can use the Google Search Console’s Inspect tool to determine the cause of blocked pages.

2. Remove duplicate content.

Duplicate content confuses search bots and negatively impacts your indexability. Remember to use canonical URLs to establish your preferred pages.

3. Audit your redirects.

Verify that all of your redirects are set up properly. Redirect loops, broken URLs, or (worse) improper redirects can cause issues when your site is being indexed. To avoid this, audit all of your redirects regularly.
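A redirect audit boils down to following each redirect until it stops and flagging anything that loops or takes too many hops. Here is a minimal Python sketch that works on an exported redirect map. The paths are invented, and `trace_redirects` is a hypothetical helper, not a feature of any crawler.

```python
def trace_redirects(redirects, start):
    """Follow a redirect map from start; return (chain, looped)."""
    chain = [start]
    while chain[-1] in redirects:
        target = redirects[chain[-1]]
        if target in chain:
            return chain + [target], True  # we came back to a URL already seen
        chain.append(target)
    return chain, False


# Invented redirect map: source path -> destination path.
REDIRECTS = {
    "/old-blog": "/blog-2019",
    "/blog-2019": "/blog",
    "/promo": "/sale",
    "/sale": "/promo",
}

for start in ("/old-blog", "/promo"):
    chain, looped = trace_redirects(REDIRECTS, start)
    hops = len(chain) - 1
    print(" -> ".join(chain), f"({hops} hops, loop={looped})")
```

Any chain with more than one hop can usually be collapsed by pointing the first URL straight at the final destination.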

4. Check the mobile-responsiveness of your site.

If your website is not mobile-friendly by now, then you’re far behind where you need to be. As early as 2016, Google started indexing mobile sites first, prioritizing the mobile experience over desktop. Today, that indexing is enabled by default. To keep up with this important trend, you can use Google’s mobile-friendly test to check where your website needs to improve.

5. Fix HTTP errors.

HTTP stands for HyperText Transfer Protocol, but you probably don’t care about that. What you do care about is when HTTP returns errors to your users or to search engines, and how to fix them.

HTTP errors can impede the work of search bots by blocking them from important content on your site. It is, therefore, extremely important to address these errors quickly and thoroughly.

Since every HTTP error is unique and requires a specific resolution, the section below has a brief explanation of each, and you’ll use the links provided to learn more about them or how to resolve them.

- 301 Permanent Redirects are used to permanently send traffic from one URL to another. Your CMS will allow you to set up these redirects, but too many of them can slow down your site and degrade your user experience, as each additional redirect adds to page load time. Aim for zero redirect chains, if possible, as too many will cause search engines to give up crawling that page.

- 302 Temporary Redirect is a way to temporarily redirect traffic from a URL to a different webpage. While this status code will automatically send users to the new webpage, the cached title tag, URL, and description will remain consistent with the origin URL. If the temporary redirect stays in place long enough, though, it will eventually be treated as a permanent redirect and those elements will pass to the destination URL.

- 403 Forbidden Messages mean that the content a user has requested is restricted based on access permissions or due to a server misconfiguration.

- 404 Error Pages tell users that the page they have requested doesn’t exist, either because it’s been removed or because they typed the wrong URL. It’s always a good idea to create 404 pages that are on-brand and engaging to keep visitors on your site.

- 405 Method Not Allowed means that your website server recognized and still blocked the access method, resulting in an error message.

- 500 Internal Server Error is a general error message that means your web server is experiencing issues delivering your site to the requesting party.

- 502 Bad Gateway Error is related to miscommunication, or an invalid response, between website servers.

- 503 Service Unavailable tells you that while your server is functioning properly, it is unable to fulfill the request.

- 504 Gateway Timeout means a server did not receive a timely response from your web server to access the requested information.

Whatever the reason for these errors, it’s important to address them to keep both users and search engines happy, and to keep both coming back to your site.

Even if your site has been crawled and indexed, accessibility issues that block users and bots will impact your SEO. That said, we need to move on to the next stage of your technical SEO audit: renderability.

Renderability Checklist

Before we dive into this topic, it’s important to note the difference between SEO accessibility and web accessibility. The latter revolves around making your web pages easy to navigate for users with disabilities or impairments, like blindness or dyslexia, for example. Many elements of online accessibility overlap with SEO best practices. However, an SEO accessibility audit does not account for everything you’d need to do to make your site more accessible to visitors who are disabled.

We’re going to focus on SEO accessibility, or rendering, in this section, but keep web accessibility top of mind as you develop and maintain your site.

An accessible site is based on ease of rendering. Below are the website elements to review for your renderability audit.

Server Performance

As you learned above, server timeouts and errors will cause HTTP errors that hinder users and bots from accessing your site. If you notice that your server is experiencing issues, use the resources provided above to troubleshoot and resolve them. Failure to do so in a timely manner can result in search engines removing your web page from their index, as it is a poor experience to show a broken page to a user.

HTTP Status

Similar to server performance, HTTP errors will prevent access to your webpages. You can use a web crawler, like Screaming Frog, Botify, or DeepCrawl, to perform a comprehensive error audit of your site.

Load Time and Page Size

If your page takes too long to load, the bounce rate is not the only problem you have to worry about. A delay in page load time can result in a server error that will block bots from your webpages or have them crawl partially loaded versions that are missing important sections of content. Depending on how much crawl demand there is for a given resource, bots will spend an equivalent amount of resources to attempt to load, render, and index pages. However, you should do everything in your control to decrease your page load time.

JavaScript Rendering

Google admittedly has a difficult time processing JavaScript (JS) and, therefore, recommends employing pre-rendered content to improve accessibility. Google also has a host of resources to help you understand how search bots access JS on your site and improve search-related issues.

Orphan Pages

Every page on your site should be linked from at least one other page, and preferably more, depending on how important the page is. When a page has no internal links pointing to it, it’s called an orphan page. Like an article with no introduction, these pages lack the context that bots need to understand how they should be indexed.
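Spotting orphan pages is a matter of comparing the pages you know exist against the pages that something actually links to. Here is a minimal Python sketch, assuming you already have a map of each page to the internal links it contains (for example, from a crawler export). The page paths are invented.

```python
def find_orphans(links, homepage="/"):
    """Return pages that no other page links to (the homepage is exempt)."""
    linked_to = {
        target
        for source, targets in links.items()
        for target in targets
        if target != source  # a page linking to itself does not count
    }
    return sorted(page for page in links if page != homepage and page not in linked_to)


# Invented site: each page maps to the internal links found on it.
SITE_LINKS = {
    "/": ["/blog", "/products"],
    "/blog": ["/blog/groom-your-dog", "/"],
    "/blog/groom-your-dog": ["/products"],
    "/products": ["/"],
    "/old-landing-page": ["/products"],
}
print(find_orphans(SITE_LINKS))
```

Pages that show up here either need an internal link from a relevant page or are candidates for pruning.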

Page Depth

Page depth refers to how many layers down a page exists in your site structure, i.e. how many clicks away from your homepage it is. It’s best to keep your site architecture as shallow as possible while still maintaining an intuitive hierarchy. Sometimes a multi-layered site is inevitable; in that case, you’ll want to prioritize a well-organized site over shallowness.

Regardless of how many layers your site structure has, keep important pages, like your product and contact pages, no more than three clicks deep. A structure that buries your product page so deep in your site that users and bots need to play detective to find it is less accessible and provides a poor experience.

For example, a website URL like this that guides your target audience to your product page is an example of a poorly planned site structure: https://ift.tt/GM4eazy.
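Click depth is straightforward to measure once you have a map of your internal links: start at the homepage and count how many clicks it takes to reach each page. Here is a minimal breadth-first search sketch in Python. The link map is invented, and the three-click threshold follows the rule of thumb above.

```python
from collections import deque


def click_depths(links, homepage="/"):
    """Return the minimum number of clicks from the homepage to each page."""
    depths = {homepage: 0}
    queue = deque([homepage])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Invented link map: each page maps to the internal links found on it.
LINK_MAP = {
    "/": ["/shop", "/contact"],
    "/shop": ["/shop/dogs"],
    "/shop/dogs": ["/shop/dogs/grooming"],
    "/shop/dogs/grooming": ["/shop/dogs/grooming/brush"],
}
depths = click_depths(LINK_MAP)
too_deep = sorted(page for page, depth in depths.items() if depth > 3)
print(depths)
print("More than three clicks deep:", too_deep)
```

Pages that never appear in the result cannot be reached from the homepage at all, which is another way to surface orphan pages.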

Redirect Chains

Whenever you resolve to redirect visitors from one web page to a different, you’re paying a worth. That worth is crawl effectivity. Redirects can decelerate crawling, scale back web page load time, and render your website inaccessible if these redirects aren’t arrange correctly. For all of those causes, attempt to maintain redirects to a minimal.

As soon as you’ve got addressed accessibility points, you may transfer onto how your pages rank within the SERPs.

Rankability Checklist

Now we move to the more topical elements that you’re probably already aware of: how to improve ranking from a technical SEO standpoint. Getting your pages to rank involves some of the on-page and off-page elements that we mentioned before, but viewed through a technical lens.

Remember that all of these elements work together to create an SEO-friendly site, so we’d be remiss to leave out any of the contributing factors. Let’s dive in.

Internal and External Linking

Links help search bots understand where a page fits in the grand scheme of a query and give them context for how to rank that page. Links guide search bots (and users) to related content and transfer page importance. Overall, linking improves crawling, indexing, and your ability to rank.

Backlink Quality

Backlinks, links from other sites back to your own, act as a vote of confidence in your site. They tell search bots that External Website A believes your page is high-quality and worth crawling. As these votes add up, search bots notice and treat your site as more credible. Sounds like a great deal, right? However, as with most great things, there’s a caveat: the quality of those backlinks matters, a lot.

Links from low-quality sites can actually hurt your rankings. There are many ways to earn quality backlinks, such as outreach to relevant publications, claiming unlinked mentions, and providing helpful content that other sites want to link to.

Content Clusters

We at HubSpot haven’t been shy about our love for content clusters or how they contribute to organic growth. Content clusters link related content so search bots can easily find, crawl, and index all of the pages you own on a particular topic. They act as a self-promotion tool that shows search engines how much you know about a topic, so they’re more likely to rank your site as an authority for any related search query.

Your rankability is the main determinant of organic traffic growth because studies show that searchers are more likely to click on the top three results on SERPs. But how do you make sure that yours is the result that gets clicked?

Let’s round this out with the final piece of the organic traffic pyramid: clickability.
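As a rough illustration of how a cluster’s internal links can be audited, the sketch below assumes one central hub page per topic (often called a pillar page) that should link to every cluster page and be linked back from each one. The hub-and-spoke layout, the `BLOG_LINKS` map, and all paths are assumptions made for this example.

```python
def cluster_link_gaps(links, pillar, cluster_pages):
    """Report cluster pages that lack a link from, or back to, the pillar page."""
    pillar_targets = set(links.get(pillar, []))
    return {
        # Cluster pages the pillar page never links to.
        "missing_from_pillar": sorted(
            page for page in cluster_pages if page not in pillar_targets
        ),
        # Cluster pages that never link back to the pillar page.
        "missing_to_pillar": sorted(
            page for page in cluster_pages if pillar not in links.get(page, [])
        ),
    }


# Hypothetical internal link map for one topic cluster.
BLOG_LINKS = {
    "/seo-guide": ["/seo-guide/site-audit", "/seo-guide/page-speed"],
    "/seo-guide/site-audit": ["/seo-guide"],
    "/seo-guide/page-speed": [],
    "/seo-guide/schema": ["/seo-guide"],
}

gaps = cluster_link_gaps(
    BLOG_LINKS,
    pillar="/seo-guide",
    cluster_pages=["/seo-guide/site-audit", "/seo-guide/page-speed", "/seo-guide/schema"],
)
print(gaps)
```

Any page listed in either bucket is a candidate for a new internal link.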

Clickability Checklist

While click-through rate (CTR) has everything to do with searcher behavior, there are things you can do to improve your clickability on the SERPs. Meta descriptions and page titles with keywords do impact CTR, but we’re going to focus on the technical elements because that’s why you’re here.

Clickability Checklist

- Use structured data.

- Win SERP features.

- Optimize for Featured Snippets.

- Consider Google Discover.

Ranking and click-through rate go hand in hand because, let’s be honest, searchers want immediate answers. The more your result stands out on the SERP, the more likely you are to get the click. Let’s go over a few ways to improve your clickability.

1. Use structured data.

Structured data employs a specific vocabulary called schema to categorize and label elements on your webpage for search bots. The schema makes it crystal clear what each element is, how it relates to your site, and how to interpret it. Basically, structured data tells bots, “This is a video,” “This is a product,” or “This is a recipe,” leaving no room for interpretation.

To be clear, using structured data is not a “clickability factor” (if there even is such a thing), but it does help organize your content in a way that makes it easy for search bots to understand, index, and potentially rank your pages.

2. Win SERP features.
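For a sense of what “This is a product” looks like in markup, here is a small sketch that builds a schema.org `Product` description as JSON-LD and wraps it in the script tag that pages commonly use to carry it. The product name, description, and price are placeholders, and the helper functions are hypothetical; check the search engine’s own documentation for the properties it expects for each type.

```python
import json


def product_json_ld(name, description, price, currency):
    """Build a minimal schema.org Product description as a plain dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",  # price as a string with two decimals
            "priceCurrency": currency,
        },
    }


def as_script_tag(data):
    """Wrap structured data in a JSON-LD script tag for the page's HTML."""
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder product details, invented for this example.
product = product_json_ld(
    name="Blue Widget",
    description="A sturdy widget in blue.",
    price=19.99,
    currency="USD",
)
tag = as_script_tag(product)
print(tag)
```

Generating the markup from the same data that renders the page helps keep the two from drifting apart.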

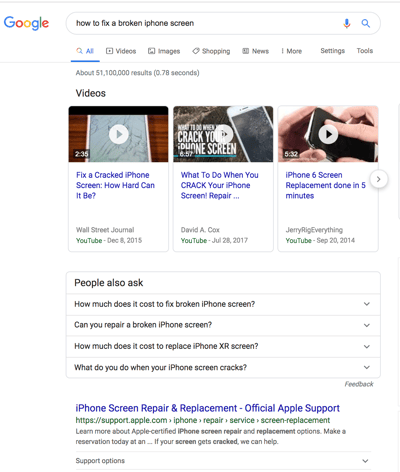

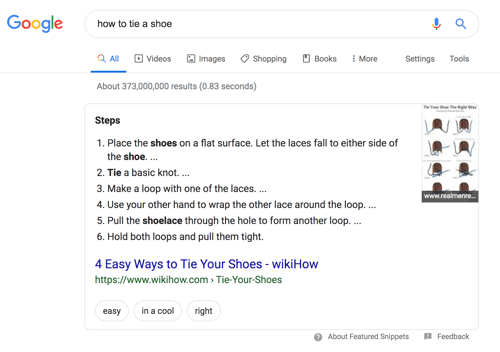

SERP features, otherwise known as rich results, are a double-edged sword. If you win them and get the click-through, you’re golden. If not, your organic results are pushed down the page beneath sponsored ads, text answer boxes, video carousels, and the like.

Rich results are those elements that don’t follow the page title, URL, meta description format of other search results. For example, the image in this section shows two SERP features, a video carousel and a “People Also Ask” box, above the first organic result.

While you can still get clicks from appearing in the top organic results, your chances are greatly improved with rich results.

How do you increase your chances of earning rich results? Write useful content and use structured data. The easier it is for search bots to understand the elements of your site, the better your chances of getting a rich result.

Structured data is useful for getting these (and other search gallery elements) from your site to the top of the SERPs, thereby increasing the probability of a click-through:

- Articles

- Videos

- Reviews

- Events

- How-Tos

- FAQs (“People Also Ask” boxes)

- Images

- Local Business Listings

- Products

- Sitelinks
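To see which of these content types a page already declares, you can pull the JSON-LD blocks out of its HTML and list their `@type` values. The sketch below uses only Python’s standard library; `SAMPLE_PAGE` is an invented snippet, and the code only looks at top-level `@type` values, so nested structures such as `@graph` are ignored.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collect the text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_json_ld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self.blocks.append("".join(self._buffer))
            self._in_json_ld = False


def schema_types(html):
    """Return the set of top-level @type values declared in a page's JSON-LD."""
    collector = JsonLdCollector()
    collector.feed(html)
    types = set()
    for block in collector.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # skip malformed blocks rather than fail the whole audit
        items = data if isinstance(data, list) else [data]
        for item in items:
            declared = item.get("@type") if isinstance(item, dict) else None
            if isinstance(declared, str):
                types.add(declared)
            elif isinstance(declared, list):
                types.update(t for t in declared if isinstance(t, str))
    return types


# Invented page snippet with two structured data blocks.
SAMPLE_PAGE = """
<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Article", "headline": "Technical SEO"}
</script>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "VideoObject", "name": "Site audit walkthrough"}
</script>
</head><body></body></html>
"""

found = schema_types(SAMPLE_PAGE)
print(sorted(found))
```

Comparing the result against the types you expect for a given template (for example, `Article` on blog posts) is a quick way to catch pages where the markup is missing.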

3. Optimize for Featured Snippets.

One unicorn SERP feature that has nothing to do with schema markup is Featured Snippets, those boxes above the search results that provide concise answers to search queries.

Featured Snippets are meant to get searchers the answers to their queries as quickly as possible. According to Google, providing the best answer to the searcher’s query is the only way to win a snippet. However, HubSpot’s research revealed a few more ways to optimize your content for featured snippets.

4. Consider Google Discover.

Google Discover is a relatively new algorithmic listing of content by category, built specifically for mobile users. It’s no secret that Google has been doubling down on the mobile experience; with over 50% of searches coming from mobile, it’s no surprise either. The tool allows users to build a library of content by selecting categories of interest (think: gardening, music, or politics).

At HubSpot, we believe topic clustering can increase the likelihood of Google Discover inclusion, and we are actively monitoring our Google Discover traffic in Google Search Console to test that hypothesis. We recommend that you also invest some time in researching this new feature. The payoff is a highly engaged user base that has basically hand-selected the content you’ve worked hard to create.

The Perfect Trio

Technical SEO, on-page SEO, and off-page SEO work together to unlock the door to organic traffic. While on-page and off-page techniques are often the first to be deployed, technical SEO plays a critical role in getting your site to the top of the search results and your content in front of your ideal audience. Use these technical tactics to round out your SEO strategy and watch the results unfold.

